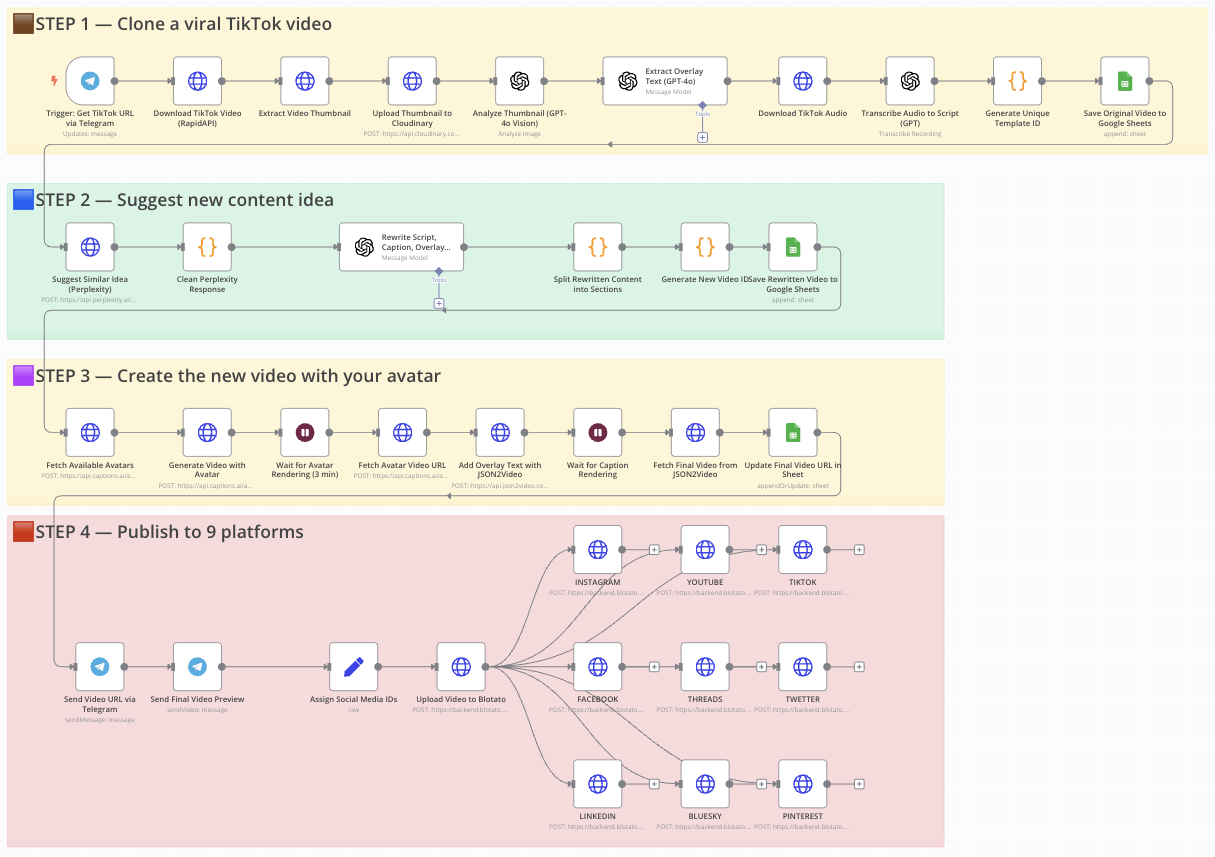

Clone viral TikToks with AI avatars & auto-post to 9 platforms using Perplexity & Blotato


Who is this for?

This workflow is perfect for:

- Content creators looking to repurpose viral content

- Social media managers who want to scale short-form content across multiple platforms

- Entrepreneurs and marketers aiming to save time and boost visibility with AI-powered automation

What problem is this workflow solving?

Reproducing viral video formats with your own branding and pushing them to multiple platforms is time-consuming and hard to scale. This workflow solves that by:

- Cloning a viral TikTok video’s structure

- Generating a new version with your avatar

- Rewriting the script, caption, and overlay text

- Auto-posting it to 9 social media platforms — without manual uploads

What this workflow does

From a simple Telegram message with a TikTok link, the workflow:

- Downloads a TikTok video and extracts its thumbnail, audio, and caption

- Transcribes the audio and saves original text into Google Sheets

- Uses Perplexity AI to suggest a new content idea in the same niche

- Rewrites the script, caption, and overlay using GPT-4o

- Generates a new video with your avatar using Captions.ai

- Adds subtitles and overlay text with JSON2Video

- Saves metadata to Google Sheets for tracking

- Sends the final video to Telegram for preview

- Auto-publishes the video to Instagram, YouTube, TikTok, Facebook, LinkedIn, Threads, X (Twitter), Pinterest, and Bluesky via Blotato
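Everything starts from a Telegram message that contains a TikTok link. The sketch below shows one way to pull that link out of the message text. It assumes links look like `www.tiktok.com/@user/video/<id>` or the short `vm.tiktok.com` / `vt.tiktok.com` share form. The regex and the `extract_tiktok_url` helper are illustrative and are not part of the template.

```python
import re
from typing import Optional

# Assumed URL shapes (illustrative): full video links and short share links.
TIKTOK_URL = re.compile(
    r"https?://(?:www\.|m\.)?tiktok\.com/@[\w.-]+/video/\d+"
    r"|https?://(?:vm|vt)\.tiktok\.com/[\w-]+/?",
    re.IGNORECASE,
)


def extract_tiktok_url(message_text: Optional[str]) -> Optional[str]:
    """Return the first TikTok link found in a Telegram message, or None."""
    match = TIKTOK_URL.search(message_text or "")
    return match.group(0) if match else None


print(extract_tiktok_url(
    "Clone this: https://www.tiktok.com/@creator/video/7301234567890123456 thanks"
))
# https://www.tiktok.com/@creator/video/7301234567890123456
```

A message without a link returns `None`. An IF node could use that result to reply with an error instead of starting the download.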

Setup

- Connect your Telegram bot to the trigger node.

- Add your OpenAI, Perplexity, Cloudinary, Captions.ai, JSON2Video, and Blotato API keys.

- Make sure your Google Sheet is ready with the appropriate columns, and connect your Google Sheets credential.

- Replace the default avatar name in the Captions.ai node with yours.

- Fill in your social media account IDs in the "Assign Platform IDs" node.

- Test by sending a TikTok URL to your Telegram bot.
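Before the first test, you can confirm that every platform in the "Assign Platform IDs" node has a real account ID. This sketch assumes the IDs are held as a simple platform-to-ID mapping that uses `YOUR_...` placeholders. The mapping, the placeholder convention, and `missing_platform_ids` are all hypothetical and do not reflect Blotato's actual API.

```python
# Hypothetical contents of the "Assign Platform IDs" node: one account ID
# per target platform. "YOUR_..." marks an ID that has not been filled in.
PLATFORM_IDS = {
    "instagram": "YOUR_INSTAGRAM_ID",
    "youtube": "YOUR_YOUTUBE_ID",
    "tiktok": "YOUR_TIKTOK_ID",
    "facebook": "YOUR_FACEBOOK_ID",
    "linkedin": "YOUR_LINKEDIN_ID",
    "threads": "YOUR_THREADS_ID",
    "twitter": "YOUR_TWITTER_ID",
    "pinterest": "YOUR_PINTEREST_ID",
    "bluesky": "YOUR_BLUESKY_ID",
}


def missing_platform_ids(ids: dict[str, str]) -> list[str]:
    """List platforms whose ID is empty or still a placeholder."""
    return [
        name for name, value in ids.items()
        if not value.strip() or value.startswith("YOUR_")
    ]


# Updating existing keys keeps their original order in the dict.
filled = {**PLATFORM_IDS, "instagram": "12345", "bluesky": "67890"}
print(missing_platform_ids(filled))
# ['youtube', 'tiktok', 'facebook', 'linkedin', 'threads', 'twitter', 'pinterest']
```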

How to customize this workflow to your needs

- Change avatar output style: adjust resolution, voice, or avatar ID.

- Refine script structure: tweak GPT instructions for different tone/format.

- Swap Perplexity with ChatGPT or Claude if needed.

- Filter by platform: disable any Blotato nodes you don’t need.

- Add approval step: insert a Telegram confirmation node before publishing.

- Adjust subtitle style or overlay text font in JSON2Video.
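The "filter by platform" and "approval step" customizations both come down to deciding which platforms receive a post. This is a hypothetical sketch of that decision. It assumes the Telegram confirmation reply arrives as plain text and that disabled platforms are kept in a set. The accepted reply words and the function name are arbitrary choices, not template settings.

```python
# Hypothetical approval vocabulary; adjust to whatever your bot asks for.
APPROVE_WORDS = {"yes", "approve", "ok", "publish"}


def platforms_to_publish(reply_text: str, all_platforms: list[str],
                         disabled: set[str]) -> list[str]:
    """Return platforms to post to, or an empty list if the reply is not an approval."""
    if reply_text.strip().lower() not in APPROVE_WORDS:
        return []
    # Keep the original platform order, skipping any you have switched off.
    return [p for p in all_platforms if p not in disabled]


platforms = ["instagram", "youtube", "tiktok", "x", "bluesky"]
print(platforms_to_publish("Approve", platforms, disabled={"x"}))
# ['instagram', 'youtube', 'tiktok', 'bluesky']
print(platforms_to_publish("no", platforms, disabled=set()))
# []
```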

📄 Documentation: Notion Guide


n8n Workflow: Telegram-Triggered AI Content Generation and Posting

This n8n workflow automates the process of generating content using OpenAI, storing details in Google Sheets, and notifying via Telegram. It's designed to streamline content creation and management, potentially for social media or blog posts.

What it does

This workflow is triggered by a message in Telegram and performs the following steps:

- Telegram Trigger: Listens for incoming messages in a configured Telegram chat.

- Edit Fields (Set): Takes the incoming Telegram message and sets it as an input for subsequent AI processing.

- OpenAI: Uses the message received from Telegram as a prompt to generate content (e.g., text, ideas, summaries) via the OpenAI API.

- Google Sheets: Appends a new row to a specified Google Sheet, likely to log the generated content, the original prompt, or other relevant details.

- Telegram: Sends a confirmation or the generated content back to the Telegram chat.

- Wait: Introduces a pause in the workflow, potentially to space out actions or allow for manual review (though no explicit manual review step is shown).

- HTTP Request: Makes an HTTP request, likely to an external service or API, possibly for further processing, publishing, or data synchronization.

- Code: Executes custom JavaScript code, which could be used for data manipulation, conditional logic, or interacting with other services not directly supported by n8n nodes.
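As an example of the data manipulation the Code node might perform, this sketch flattens incoming items into rows ready for the Google Sheets append. It assumes n8n's item shape (`{"json": {...}}`) and two hypothetical fields, `prompt` and `content`. Rename them to whatever your Edit Fields and OpenAI nodes actually output. The function is plain Python and is shown outside n8n.

```python
from datetime import datetime, timezone


def build_sheet_rows(items: list[dict]) -> list[dict]:
    """Turn n8n-style items ({"json": {...}}) into one flat row per item.

    Field names "prompt" and "content" are hypothetical placeholders.
    """
    rows = []
    for item in items:
        data = item.get("json", {})
        content = (data.get("content") or "").strip()
        rows.append({"json": {
            "prompt": data.get("prompt", ""),
            "content": content,
            "word_count": len(content.split()),
            # UTC timestamp so rows from different runs sort consistently.
            "created_at": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        }})
    return rows


rows = build_sheet_rows([
    {"json": {"prompt": "3 tips for reels", "content": " Hook fast. Add captions. "}}
])
print(rows[0]["json"]["word_count"])  # 4
```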

Prerequisites/Requirements

To use this workflow, you will need:

- Telegram Bot Token: For the Telegram Trigger and Telegram nodes to interact with your Telegram bot.

- Google Sheets API Access: Configured with appropriate permissions to write to your desired Google Sheet.

- OpenAI API Key: To access the OpenAI content generation services.

- Credentials for any service targeted by the HTTP Request node: Depending on what the HTTP Request node is configured to do.

Setup/Usage

- Import the workflow: Import the provided JSON into your n8n instance.

- Configure Credentials:

  - Telegram: Set up your Telegram Bot credential for both the "Telegram Trigger" and "Telegram" nodes.

  - Google Sheets: Configure your Google Sheets credential.

  - OpenAI: Set up your OpenAI API Key credential.

  - HTTP Request: Configure any necessary credentials or API keys for the service targeted by the HTTP Request node.

- Customize Nodes:

  - Telegram Trigger: Ensure it's listening to the correct chat or command.

  - Edit Fields (Set): Verify that the "prompt" field is correctly set from the Telegram message.

  - OpenAI: Adjust the model, prompt, and other parameters as needed for your content generation requirements.

  - Google Sheets: Specify the Spreadsheet ID, Sheet Name, and map the data to be written from the OpenAI output.

  - Telegram: Customize the message sent back to Telegram.

  - Wait: Adjust the wait duration if needed.

  - HTTP Request: Configure the URL, method, headers, and body according to the external API you wish to call.

  - Code: Modify the JavaScript code to fit your specific data processing or integration needs.

- Activate the workflow: Once all nodes are configured, activate the workflow. It will start listening for Telegram messages.
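To check what the Edit Fields node should read, it helps to know the shape of an incoming Telegram update. This minimal sketch assumes the trigger output follows the Telegram Bot API Update object: `message.chat.id`, `message.text`, and `message.caption` for media messages. `extract_prompt` is a hypothetical helper.

```python
from typing import Optional


def extract_prompt(update: dict) -> Optional[dict]:
    """Pull the chat ID and prompt text out of a Telegram update.

    Returns None for updates without usable text (e.g. stickers).
    """
    # Edited messages arrive under a different key in the Bot API.
    message = update.get("message") or update.get("edited_message")
    if not message:
        return None
    # Photos and videos carry their text in "caption" rather than "text".
    text = (message.get("text") or message.get("caption") or "").strip()
    if not text:
        return None
    return {"chat_id": message["chat"]["id"], "prompt": text}


update = {"update_id": 1,
          "message": {"message_id": 10, "chat": {"id": 42},
                      "text": " Write a hook about coffee "}}
print(extract_prompt(update))
# {'chat_id': 42, 'prompt': 'Write a hook about coffee'}
```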

Related Templates

Track competitor SEO keywords with Decodo + GPT-4.1-mini + Google Sheets

This workflow automates competitor keyword research using an OpenAI LLM and Decodo for intelligent web scraping.

Who this is for

- SEO specialists, content strategists, and growth marketers who want to automate keyword research and competitive intelligence.

- Marketing analysts managing multiple clients or websites who need consistent SEO tracking without manual data pulls.

- Agencies or automation engineers using Google Sheets as an SEO data dashboard for keyword monitoring and reporting.

What problem this workflow solves

Tracking competitor keywords manually is slow and inconsistent. Most SEO tools provide limited API access or lack contextual keyword analysis. This workflow solves that by:

- Automatically scraping any competitor’s webpage with Decodo.

- Using OpenAI GPT-4.1-mini to interpret keyword intent, density, and semantic focus.

- Storing structured keyword insights directly in Google Sheets for ongoing tracking and trend analysis.

What this workflow does

- Trigger: Manually start the workflow or schedule it to run periodically.

- Input Setup: Define the website URL and target country (e.g., https://dev.to, france).

- Data Scraping (Decodo): Fetch competitor web content and metadata.

- Keyword Analysis (OpenAI GPT-4.1-mini): Extract primary and secondary keywords, identify focus topics and semantic entities, generate a keyword density summary and SEO strength score, and recommend optimization and internal linking opportunities.

- Data Structuring: Clean and convert GPT output into JSON format.

- Data Storage (Google Sheets): Append structured keyword data to a Google Sheet for long-term tracking.

Setup

Prerequisites:

- If you are new to Decodo, sign up at visit.decodo.com.

- n8n account with workflow editor access.

- Decodo API credentials.

- OpenAI API key.

- Google Sheets account connected via OAuth2.

- The Decodo Community node installed in your n8n instance.

Create a Google Sheet: Add columns for primary_keywords, seo_strength_score, keyword_density_summary, etc., and share it with your n8n Google account.

Connect Credentials: Add credentials for:

- Decodo API: register, log in, and obtain the Basic Authentication Token from the Decodo dashboard.

- OpenAI API (for GPT-4o-mini).

- Google Sheets OAuth2.

Configure Input Fields: Edit the "Set Input Fields" node to set your target site and region.

Run the Workflow: Click Execute Workflow in n8n, then view the structured results in your connected Google Sheet.

How to customize this workflow

- Track Multiple Competitors: Use a Google Sheet or CSV list of URLs and loop through them with the Split In Batches node.

- Add Language Detection: Add a Gemini or GPT node before keyword analysis to detect the content language and adjust prompts.

- Enhance the SEO Report: Expand the GPT prompt to include backlink insights, metadata optimization, or readability checks.

- Integrate Visualization: Connect your Google Sheet to Looker Studio for SEO performance dashboards.

- Schedule Auto-Runs: Use the Cron node to run weekly or monthly competitor keyword refreshes.

Summary

This workflow automates competitor keyword research using:

- Decodo for intelligent web scraping.

- OpenAI GPT-4.1-mini for keyword and SEO analysis.

- Google Sheets for live tracking and reporting.

It’s a complete AI-powered SEO intelligence pipeline for teams that want actionable insights on keyword gaps, optimization opportunities, and content focus trends without relying on expensive SEO SaaS tools.
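The Data Structuring step has to handle model replies that wrap JSON in Markdown fences or surround it with prose. This is a minimal sketch of that cleanup, assuming the model is asked to return a single JSON object. If the first parse fails, it falls back to slicing from the first `{` to the last `}`. That fallback is a heuristic, not the template's own code.

```python
import json
import re

# Matches an opening fence (optionally tagged "json") or a closing fence.
FENCE = re.compile(r"^`{3}(?:json)?\s*|\s*`{3}$", re.IGNORECASE)


def parse_llm_json(raw: str) -> dict:
    """Parse a model reply that should contain exactly one JSON object."""
    text = FENCE.sub("", raw.strip())
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        # Fallback: keep only the outermost {...} span if prose surrounds it.
        start, end = text.find("{"), text.rfind("}")
        if start == -1 or end <= start:
            raise
        return json.loads(text[start:end + 1])


print(parse_llm_json('Here is the analysis: {"seo_strength_score": 7} Hope this helps.'))
# {'seo_strength_score': 7}
```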

Automate RSS to social media pipeline with AI, Airtable & GetLate for multiple platforms

Overview

Automates your complete social media content pipeline: sources articles from Wallabag RSS, generates platform-specific posts with AI, creates contextual images, and publishes via the GetLate API. Built with 63 nodes across two workflows to handle LinkedIn, Instagram, and Bluesky, with easy expansion to more platforms.

Ideal for: content marketers, solo creators, agencies, and community managers maintaining a consistent multi-platform presence with minimal manual effort.

How It Works

Two-workflow architecture:

- Content Aggregation Workflow

  - Monitors Wallabag RSS feeds for tagged articles (to-share-linkedin, to-share-instagram, etc.).

  - Extracts and converts content from HTML to Markdown.

  - Stores structured data in Airtable with platform assignment.

- AI Generation & Publishing Workflow

  - A scheduled trigger queries Airtable for unpublished content.

  - Routes to platform-specific sub-workflows (LinkedIn, Instagram, Bluesky).

  - An LLM generates optimized post text and image prompts based on custom brand parameters.

  - Optionally generates AI images and hosts them on the Imgbb CDN.

  - Publishes via the GetLate API (immediate or draft mode).

  - Updates Airtable with publication status and metadata.

Key Features:

- Tag-based content routing using Wallabag's native tagging system.

- Swappable AI providers (Groq, OpenAI, Anthropic).

- Platform-specific optimization (tone, length, hashtags, CTAs).

- Modular design: duplicate sub-workflows to add new platforms in ~30 minutes.

- Centralized Airtable tracking with 17 data points per post.

Set Up Steps

Setup time: ~45-60 minutes for initial configuration.

- Create accounts and get API keys (~15 min): Wallabag (with RSS feeds enabled), GetLate (social media publishing), Airtable (create a base with the provided schema; see sticky notes), an LLM provider (Groq, OpenAI, or Anthropic), an image service (Hugging Face, Fal.ai, or Stability AI), and Imgbb (image hosting).

- Configure n8n credentials (~10 min): Add all API keys in n8n's credential manager. Detailed credential setup instructions are in the workflow sticky notes.

- Set up the Airtable database (~10 min): Create the "RSS Feed - Content Store" base, add the 19 required fields (schema provided in the workflow sticky notes), and get the Airtable base ID and API key.

- Customize brand prompts (~15 min): Edit the "Set Custom SMCG Prompt" node for each platform. Define brand voice, tone, goals, audience, and image preferences. Platform-specific examples are provided in sticky notes.

- Configure platform settings (~10 min): Set GetLate account IDs for each platform, enable or disable image generation per platform, choose immediate publish vs. draft mode, and adjust the schedule trigger frequency.

- Test and deploy: Tag test articles in Wallabag, monitor the first few executions in draft mode, and activate the workflows when you are satisfied with the output.

Important: This is a proof-of-concept template. Test thoroughly in draft mode before production use. Detailed setup instructions, troubleshooting tips, and customization guidance are in the workflow's sticky notes.

Technical Details

- 63 nodes: 9 Airtable operations, 8 HTTP requests, 7 code nodes, 3 LangChain LLM chains, 3 RSS triggers, 3 GetLate publishers.

- Supports: multiple LLM providers, multiple image generation services, and unlimited platforms via the modular architecture.

- Tracking: 17 metadata fields per post, including publish status, applied parameters, character counts, hashtags, and image URLs.

Prerequisites

- n8n instance (self-hosted or cloud).

- Accounts: Wallabag, GetLate, Airtable, LLM provider, image generation service, Imgbb.

- Basic understanding of n8n workflows and credential configuration.

- Time to customize prompts for your brand voice.

Detailed documentation, the Airtable schema, prompt examples, and troubleshooting guides are in the workflow's sticky notes.

Category Tags: social-media-automation, ai-content-generation, rss-to-social, multi-platform-posting, getlate-api, airtable-database, langchain, workflow-automation, content-marketing
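Tag-based routing depends on a naming convention: to-share-linkedin, to-share-instagram, and so on. The sketch below expresses that convention as a single function. It assumes tags arrive as a list of strings and that only LinkedIn, Instagram, and Bluesky are wired up. The node count lists three separate RSS triggers, so the template may route differently. Treat this as an illustration of the convention, not its implementation.

```python
# Assumed: the three platforms the template ships with.
SUPPORTED = {"linkedin", "instagram", "bluesky"}
PREFIX = "to-share-"


def route_article(tags: list[str]) -> list[str]:
    """Return the supported platforms an article is tagged for, without duplicates."""
    platforms: list[str] = []
    for tag in tags:
        tag = tag.strip().lower()
        if tag.startswith(PREFIX):
            platform = tag[len(PREFIX):]
            # Ignore tags for platforms that have no sub-workflow yet.
            if platform in SUPPORTED and platform not in platforms:
                platforms.append(platform)
    return platforms


print(route_article(["AI", "to-share-linkedin", "to-share-bluesky", "to-share-tiktok"]))
# ['linkedin', 'bluesky']
```

Adding a platform then means adding its name to the supported set alongside a duplicated sub-workflow.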

AI website scraper & company intelligence

Description

This workflow turns any website URL into a structured, intelligent company profile. A form triggers it: the user submits a website and chooses between a "basic" and a "deep" scrape. The workflow extracts key information (mission, services, contacts, SEO keywords), stores it in a structured Supabase database, and archives a full JSON backup to Google Drive. A secondary AI agent automatically finds and saves competitors for each company, building a rich, interconnected database of company intelligence.

Quick Implementation Steps

- Import the Workflow: Import the provided JSON file into your n8n instance.

- Install Custom Community Node: You must install the community node from https://www.npmjs.com/package/n8n-nodes-crawl-and-scrape. Firecrawl n8n documentation: https://docs.firecrawl.dev/developer-guides/workflow-automation/n8n

- Install Additional Nodes: n8n-nodes-crawl-and-scrape and n8n-nodes-mcp (Firecrawl MCP).

- Set up Credentials: Create credentials in n8n for the Firecrawl API, Supabase, Mistral AI, and Google Drive.

- Configure API Key (CRITICAL): Open the Web Search tool node, go to Parameters → Headers, and replace the hardcoded Tavily AI API key with your own.

- Configure Supabase Nodes: Assign your Supabase credential to all Supabase nodes and make sure table names (e.g., companies, competitors) match your schema.

- Configure Google Drive Nodes: Assign your Google Drive credential to the Google Drive2 and save to Google Drive1 nodes, and select the correct Folder ID.

- Activate Workflow: Turn on the workflow and open the Webhook URL in the "On form submission" node to access the form.

What It Does

- Form Trigger: Captures user input: "Website URL" and "Scraping Type" (basic or deep).

- Scraping Router: A Switch node routes the flow. Deep Scraping → AI-based MCP Firecrawler agent. Basic Scraping → Crawlee node.

- Deep Scraping (Firecrawl AI Agent): Uses Firecrawl and Tavily Web Search to extract a detailed JSON profile: mission, services, contacts, SEO keywords, etc.

- Basic Scraping (Crawlee): Uses the Crawl and Scrape node to collect raw text, then a Mistral-based AI extractor structures the data into JSON.

- Data Storage: Stores structured data in Supabase tables (companies, company_basicprofiles) and archives a full JSON backup to Google Drive.

- Automated Competitor Analysis: Runs after a deep scrape. Uses Tavily web search to find competitors (e.g., from Crunchbase) and saves competitor data to Supabase, linked by company_id.

Who's It For

- Sales & Marketing Teams: Enrich leads with deep company info.

- Market Researchers: Build structured, searchable company databases.

- B2B Data Providers: Automate company intelligence collection.

- Developers: Use as a base for RAG or enrichment pipelines.

Requirements

- n8n instance (self-hosted or cloud).

- Supabase account with tables such as companies, competitors, social_links, etc.

- Mistral AI API key.

- Google Drive credentials.

- Tavily AI API key (optional).

- Custom nodes: n8n-nodes-crawl-and-scrape.

How It Works: Flow Summary

- Form Trigger: Captures "Website URL" and "Scraping Type".

- Switch Node: deep → MCP Firecrawler (AI Agent); basic → Crawl and Scrape node.

- Scraping & Extraction: Deep path: Firecrawler → JSON structure. Basic path: Crawlee → Mistral extractor → JSON.

- Storage: Saves JSON to Supabase and archives it in Google Drive.

- Competitor Analysis (deep only): Finds competitors via Tavily and saves them to the Supabase competitors table.

- End: Finishes with a No Operation node.

How To Set Up

- Import the workflow JSON.

- Install the community nodes (especially n8n-nodes-crawl-and-scrape from npm).

- Configure credentials (Supabase, Mistral AI, Google Drive).

- Add your Tavily API key.

- Connect the Supabase and Drive nodes to their credentials.

- Reconnect the "basic" path if it is disconnected.

- Activate the workflow.

- Test via the webhook form URL.

How To Customize

- Change LLMs: Swap Mistral for OpenAI or Claude.

- Edit Scraper Prompts: Modify the system prompts in the AI agent nodes.

- Change Extraction Schema: Update the JSON Schema in the extractor nodes.

- Fix Relational Tables: Add an Items node before Supabase inserts for arrays (social links, keywords).

- Enhance Automation: Add email or Slack notifications, or replace the form trigger with a Google Sheets trigger.

Add-ons

- Automated Trigger: Run on new sheet rows.

- Notifications: Email or Slack alerts after completion.

- RAG Integration: Use the Supabase database as a chatbot knowledge source.

Use Case Examples

- Sales Lead Enrichment: Instantly get company and competitor data from a URL.

- Market Research: Collect and compare companies in a niche.

- B2B Database Creation: Build a proprietary company dataset.

Troubleshooting Guide

| Issue | Possible Cause | Solution |
|-------|----------------|----------|
| Form Trigger 404 | Workflow not active | Activate the workflow |
| Web Search Tool fails | Missing Tavily API key | Replace the placeholder key |
| FIRECRAWLER / find competitor fails | Missing MCP node | Install n8n-nodes-mcp |
| Basic scrape does nothing | Switch node path disconnected | Reconnect the "basic" output |
| Supabase node error | Wrong table/column names | Match the schema exactly |

Need Help or More Workflows?

Want to customize this workflow for your business or integrate it with your existing tools? Our team at Digital Biz Tech can tailor it precisely to your use case, from automation logic to AI-powered enhancements. Contact: shilpa.raju@digitalbiz.tech. For more such offerings, visit us: https://www.digitalbiz.tech
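The "Fix Relational Tables" customization means splitting the array fields of one extracted profile into separate rows that point back to the parent company. This sketch assumes the profile contains `social_links` and `seo_keywords` arrays and that a `keywords` table exists next to `social_links`. The field names, the `keywords` table, and `explode_company` are hypothetical; match them to your Supabase schema.

```python
def explode_company(profile: dict, company_id: int) -> dict[str, list[dict]]:
    """Split array fields of an extracted profile into per-table rows.

    Each row carries company_id so it can be joined back to the companies table.
    """
    return {
        "social_links": [
            {"company_id": company_id, "url": url}
            for url in profile.get("social_links", [])
        ],
        "keywords": [
            {"company_id": company_id, "keyword": kw}
            for kw in profile.get("seo_keywords", [])
        ],
    }


profile = {
    "name": "Example Co",
    "social_links": ["https://www.linkedin.com/company/example", "https://x.com/example"],
    "seo_keywords": ["crm", "sales automation"],
}
rows = explode_company(profile, company_id=17)
print(len(rows["social_links"]), len(rows["keywords"]))  # 2 2
```

Each list can then be sent to its own Supabase insert, one row per item.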