🏤 Scraping European Union events into Google Sheets

Tags: Scraping, Events, European Union, Networking

Context

Hey! I’m Samir, a Supply Chain Engineer and Data Scientist from Paris, and the founder of LogiGreen Consulting.

We use AI, automation, and data to support sustainable and data-driven operations across all types of organizations.

This workflow is part of our business networking strategy: it tracks official EU events that may relate to the topics we cover.

> Want to stay ahead of critical EU meetings and events without checking the website every day?

This n8n workflow automatically scrapes the EU’s official event portal and logs the latest entries with clean metadata including date, location, category, and link.

📬 For collaborations, feel free to connect with me on LinkedIn

Who is this template for?

This workflow is useful for:

- Policy & public affairs teams following institutional activities

- Sustainability teams watching for relevant climate-related summits

- NGOs and researchers interested in event calendars

- Data teams building dashboards on public event trends

What does it do?

This n8n workflow:

- 🌐 Scrapes the EU events portal for new meetings and conferences

- 📅 Extracts event metadata (title, date, location, type, and link)

- 🔁 Handles pagination across multiple pages

- 🚫 Checks for duplicates already stored

- 📊 Saves new records into a connected Google Sheet

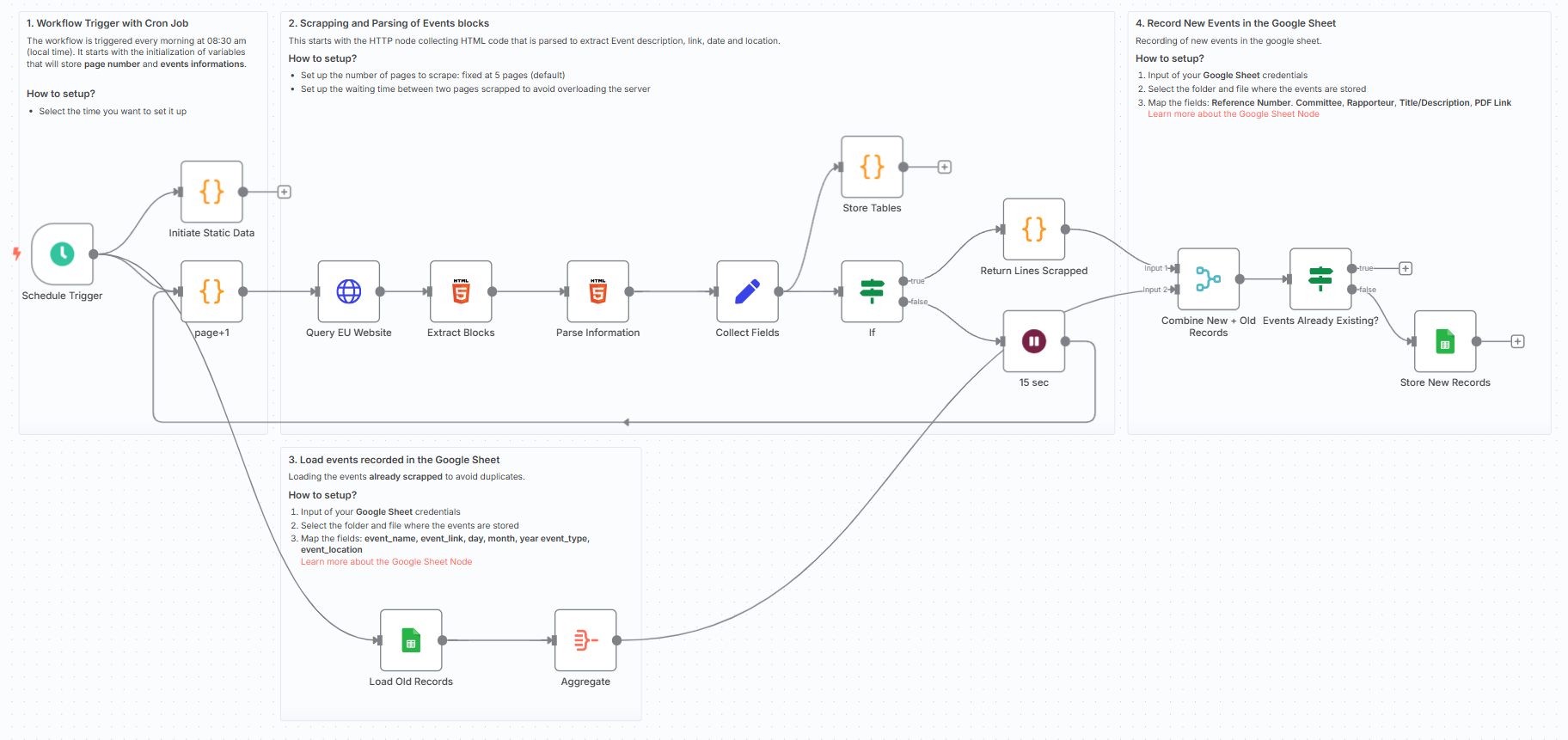

How it works

- Triggered daily via cron

- HTTP node loads the event listing HTML

- Extract HTML blocks for each event article

- Parse event name, link, type, location, and full date

- Concatenate and clean dates for easy tracking

- Store non-duplicate entries in Google Sheets
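In n8n, the HTML node performs this extraction with CSS selectors and the Code node cleans the result. The Python sketch below mirrors the parse-and-concatenate steps outside n8n, using only the standard library's `html.parser`. The markup it expects (one `<article>` per event, a title link, and `span` elements with classes such as `event-type`, `event-location`, `day`, `month` and `year`) is a hypothetical stand-in, not the EU portal's actual HTML; adapt the class names to what the page really serves.

```python
from datetime import datetime
from html.parser import HTMLParser

# Hypothetical class names: the real EU portal markup may differ.
FIELD_CLASSES = {
    "event-type": "type",
    "event-location": "location",
    "day": "day",
    "month": "month",
    "year": "year",
}


def clean(raw):
    """Concatenate the day/month/year pieces and normalise them to ISO 8601."""
    date_text = " ".join(raw.get(k, "") for k in ("day", "month", "year")).strip()
    try:
        iso = datetime.strptime(date_text, "%d %B %Y").date().isoformat()
    except ValueError:
        iso = date_text  # keep the raw text if the format is unexpected
    return {
        "title": raw.get("title", ""),
        "link": raw.get("link", ""),
        "type": raw.get("type", ""),
        "location": raw.get("location", ""),
        "date": iso,
    }


class EventParser(HTMLParser):
    """Collects one cleaned dict per <article> block (assumed markup)."""

    def __init__(self):
        super().__init__()
        self.events = []
        self.current = None  # fields of the article being parsed
        self.field = None    # field whose text is currently being captured

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "article":
            self.current, self.field = {}, None
        elif self.current is None:
            return  # ignore everything outside an event article
        elif tag == "a" and "link" not in self.current:
            self.current["link"] = attrs.get("href", "")
            self.field = "title"  # the first link's text is the event name
        elif tag == "span" and attrs.get("class") in FIELD_CLASSES:
            self.field = FIELD_CLASSES[attrs["class"]]

    def handle_endtag(self, tag):
        if tag in ("a", "span"):
            self.field = None
        elif tag == "article" and self.current is not None:
            self.events.append(clean(self.current))
            self.current = None

    def handle_data(self, data):
        if self.current is not None and self.field:
            self.current[self.field] = self.current.get(self.field, "") + data.strip()


SAMPLE = """
<article>
  <a href="/events/climate-summit">Climate Summit</a>
  <span class="event-type">Conference</span>
  <span class="event-location">Brussels</span>
  <span class="day">12</span><span class="month">June</span><span class="year">2025</span>
</article>
"""

parser = EventParser()
parser.feed(SAMPLE)
print(parser.events)
```

Keeping the raw date text when parsing fails means a markup or wording change shows up as an odd value in the sheet rather than as a failed run.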

The workflow uses static data to track pagination and ensure only new events are stored, making it ideal for building up a clean dataset over time.
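Inside n8n, a JavaScript Code node can read and write persistent state through `$getWorkflowStaticData('global')`; n8n's documentation notes that this data is saved only for production (trigger-started) executions, not manual test runs. The Python sketch below imitates that idea with a plain dict. The keys `seen_links` and `last_page`, and the rule of advancing the page cursor only while new events keep appearing, are assumptions made for illustration, not the template's confirmed logic.

```python
from typing import Any


def filter_new_events(events: list[dict[str, Any]], state: dict[str, Any]) -> list[dict[str, Any]]:
    """Return only events whose link has not been stored in an earlier run.

    `state` stands in for n8n's workflow static data: a dict that survives
    between executions. "seen_links" is a hypothetical key name.
    """
    seen = set(state.get("seen_links", []))
    fresh = []
    for event in events:
        if event["link"] not in seen:
            fresh.append(event)
            seen.add(event["link"])  # also drops duplicates within this batch
    state["seen_links"] = sorted(seen)  # a list keeps the state JSON-serialisable
    return fresh


def next_page(state: dict[str, Any], found_new: bool) -> int:
    """Advance the page cursor while new events appear; otherwise restart at page 0."""
    state["last_page"] = (state.get("last_page", 0) + 1) if found_new else 0
    return state["last_page"]


state: dict[str, Any] = {}
first = filter_new_events([{"link": "/events/a"}, {"link": "/events/b"}, {"link": "/events/a"}], state)
second = filter_new_events([{"link": "/events/b"}, {"link": "/events/c"}], state)
page = next_page(state, found_new=bool(second))
print(first, second, page)
```

Using the event link as the duplicate key assumes each event has a stable URL; if the portal reuses or rewrites links, a composite key such as title plus date would be safer.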

What do I need to get started?

You’ll need:

- A Google Sheet connected to your n8n instance

- No coding or AI tools needed: just n8n and this template

Follow the Guide!

Sticky notes are included directly inside the workflow to guide you step-by-step through setup and customisation.

Notes

- This is ideal for analysts and consultants who want clean, structured data from the EU portal

- You can add filtering, email alerts, or AI classifiers later

This workflow was built using n8n version 1.93.0

Submitted: June 1, 2025

n8n Workflow: European Union Events Scraper

This n8n workflow is designed to scrape European Union event data from a website and process it. Although the directory name suggests integration with Google Sheets, the provided JSON defines only the scraping and data-manipulation steps; no Google Sheets node is actually configured.

What it does

The workflow extracts and transforms event data from a web page:

- Initiates on Schedule: The workflow is triggered at predefined intervals.

- Performs HTTP Request: It makes an HTTP request, presumably to a European Union events page, to fetch the raw HTML content.

- Extracts HTML Data: It then processes the received HTML to extract specific data elements.

- Transforms Data: The extracted data is then processed and transformed using a Code node, likely for cleaning, reformatting, or further parsing.

- Aggregates Data: The transformed data is aggregated, possibly combining multiple items into a single structure or consolidating information.

- Conditional Logic: The workflow includes an 'If' node, indicating that it applies conditional logic to the processed data, potentially routing it based on certain criteria.

- Delays Execution: A 'Wait' node is present, suggesting that there might be a deliberate pause in the workflow's execution, perhaps to respect rate limits or for timing purposes.

- Edits Fields: The 'Edit Fields (Set)' node is used to manipulate or add fields to the data items, preparing them for subsequent steps.
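The exact If condition and Wait duration are not documented above, so the following Python sketch assumes one plausible arrangement: keep requesting pages until one returns no events (the If branch), pausing between requests (the Wait step). `fetch_page` is a hypothetical callable standing in for the HTTP Request plus HTML extraction; injecting it keeps the loop testable without network access.

```python
import time
from typing import Callable


def crawl(
    fetch_page: Callable[[int], list[dict]],
    max_pages: int = 10,
    delay_s: float = 1.0,
) -> list[dict]:
    """Collect events page by page until an empty page or the page limit."""
    collected: list[dict] = []
    for page in range(max_pages):
        events = fetch_page(page)
        if not events:  # If-style check: an empty page means no more results
            break
        collected.extend(events)
        time.sleep(delay_s)  # Wait-style pause to avoid hammering the server
    return collected


# Fake pages instead of real HTTP calls; page 2 does not exist, so it returns [].
pages = {0: [{"link": "/e/1"}, {"link": "/e/2"}], 1: [{"link": "/e/3"}]}
result = crawl(lambda p: pages.get(p, []), delay_s=0)
print(len(result))
```

The `max_pages` cap is a safety net: if the stop condition never fires (for example because the site repeats its last page), the loop still ends.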

Prerequisites/Requirements

- n8n Instance: A running instance of n8n to import and execute the workflow.

- Target Website: Access to the European Union events website that the HTTP Request node is configured to scrape.

- Custom Code: Familiarity with, and possibly modification of, the JavaScript code in the "Code" node to match your specific scraping and transformation needs.

Setup/Usage

- Import the Workflow: Import the provided JSON into your n8n instance.

- Configure HTTP Request:
  - Open the "HTTP Request" node.
  - Ensure the URL and any other request parameters (headers, authentication, etc.) are correctly configured to target the European Union events website you intend to scrape.

- Configure HTML Node:
  - Open the "HTML" node.
  - Adjust the CSS selectors or other extraction rules to accurately pull the desired event data from the HTML content received from the HTTP Request.

- Review and Customize Code Node:
  - Open the "Code" node.
  - Review the JavaScript code to understand how it transforms the data. Modify it as needed to fit your specific data processing requirements.

- Configure If Node:
  - Open the "If" node.
  - Define the conditions based on which you want to route or filter the event data.

- Configure Schedule Trigger:
  - Open the "Schedule Trigger" node.
  - Set the desired interval for how often the workflow should run (e.g., daily, weekly).

- Activate the Workflow: Once configured, activate the workflow in n8n.

Note: The workflow currently scrapes and processes data but does not store it anywhere (e.g., Google Sheets, database). To save the scraped data, you would need to connect additional nodes (like Google Sheets, database nodes, or file storage nodes) after the "Aggregate" or "Edit Fields" node, depending on your desired output format and destination.
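In n8n, the Google Sheets node handles authentication and the API call for you. To make the hand-off concrete, this Python sketch shows the shape of data such a step sends: the Sheets API v4 `spreadsheets.values.append` method takes a `values` array of rows in the request body and a required `valueInputOption` query parameter. The column order, the `YOUR_SPREADSHEET_ID` placeholder and the `Events!A1` range are assumptions; authentication and actually sending the request are deliberately left out.

```python
import json
from urllib.parse import quote

# Assumed header order of the target sheet; adjust to match your columns.
COLUMNS = ["title", "date", "location", "type", "link"]


def to_rows(events):
    """Turn event dicts into row lists in a fixed column order; missing fields become ''."""
    return [[event.get(col, "") for col in COLUMNS] for event in events]


def build_append_request(spreadsheet_id, sheet_range, events):
    """Build the URL and JSON body for a Sheets API v4 values.append call (not sent here)."""
    url = (
        "https://sheets.googleapis.com/v4/spreadsheets/"
        f"{spreadsheet_id}/values/{quote(sheet_range)}:append"
        "?valueInputOption=RAW"
    )
    body = {"values": to_rows(events)}
    return url, json.dumps(body)


url, body = build_append_request(
    "YOUR_SPREADSHEET_ID",
    "Events!A1",
    [{"title": "Climate Summit", "date": "2025-06-12", "link": "/events/climate-summit"}],
)
print(url)
print(body)
```

`RAW` stores values exactly as sent; `USER_ENTERED` would instead let Sheets parse strings such as dates as if typed by a user.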
